- SS&C Blue Prism Community

- Get Help

- Digital Exchange

- RE: Working with large CSV files? Just chunk it! :...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Working with large CSV files? Just chunk it! :-)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

24-02-22 12:28 AM

- The Blue Prism executable is a 32-bit process and thus limited to a maximum of 2GB of addressable memory.

- Many of the actions, in existing VBOs, are designed to read the entire CSV file at one time.

There are a few ways to handle these large CSV files. The one I'll focus on here is chunking. What is chunking? To understand chunking, consider the following example:

Example

You have a CSV file containing 1 million records of sales data with each record containing 14 columns. You need to read that data and identify every record for company XYZ. How might you go about it?

The standard solution comes down to using either the MS Excel or Utility - File Management VBOs to read/import the CSV file contents in one step. While this works fine in cases where the CSV file is relatively small, it begins to strain as the file size and record/column count increase.

Chunking

To address this scenario, we have recently added a new action to the Utility - File Management VBO called Get CSV Text as Collection - Chunk. This new action is similar to the existing Get CSV Text as Collection action except that instead of trying to read the entire CSV at one time it will read a subset of the records contained in the CSV (i.e. a chunk).

To use the action, you pass in the following parameters:

- CSV File Path - The full path and name of the CSV file.

- First Line is Header - A flag indicating if the first line of the CSV is a column header line.

- Starting Row - The row number where reading of the CSV data should start. Note: This value is zero-indexed meaning the first data record is row #0.

- Chunk Size - A number indicating the size (i.e. number of records) to read in the chunk.

Assuming the file exists, the action will open the file, pull out the required rows of data, and return that smaller collection along with a value showing the number of records that were actually read (in case the Chunk Size is larger than the number of available/remaining records) and the number of the next record where a subsequent call to this action should begin reading for the next chunk of data.

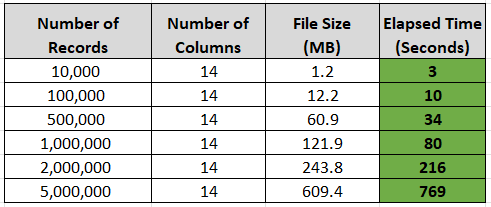

I've conducted some tests using CSV files ranging from 10K to 5M records (14 columns of data per record). Below is a screenshot of the elapsed times to read the various test files.

I tested both options of reading the data (non-chunked and chunked). For the tests up to, and including, 100K records, non-chunked reading worked fine. Beginning with the 500K test, and onward, memory issues arose. From 1M onward, the test failed every time unless the chunking action was used.

Note 1: The times shown are just for reading the data from the CSV file, populating the Collection, and returning the collection to the calling process. Additional time would be required to then loop through the collections to perform whatever business processing is necessary (ex. looking for company XYZ records).

Note 2: These tests were conducted on a 3.5 year old Dell Latitude 7490 with a Core i7-8650U CPU and 16GB of RAM.

Note 3: The memory issues don't magically show up at record 100,001. It varies depending on the system you're running and it's specs. And there's a pretty significant jump between my test at 100K and 500K records.

Not exactly lightning fast, but for a Digital Worker I'd say more than respectable. And best of all, no System.OutOfMemory exceptions. 😉

As I mentioned above, there are other options for addressing this issue including:

- Reading the CSV line-by-line and loading a work queue.

- Using a Code stage and possibly Linq.

- etc

In the future I may write additional articles discussing some of the other options. For now, if you're experiencing this issue, consider downloading the latest version of the Utility - File Management VBO (v6.10.4) from the Digital Exchange and see how it works for you.

P.S. - I've attached the test process that I used. You'll need to furnish your own CSV test files, or you can download the same files I used. They are available on the site E for Excel.

Cheers,

------------------------------

Eric Wilson

Director, Integrations and Enablement

Blue Prism Digital Exchange

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

24-02-22 05:11 AM

------------------------------

----------------------------------

Regards,

Devneet Mohanty

Intelligent Process Automation Consultant | Sr. Consultant - Automation Developer,

Wonderbotz India Pvt. Ltd.

Blue Prism Community MVP | Blue Prism 7x Certified Professional

Website: https://devneet.github.io/

Email: devneetmohanty07@gmail.com

----------------------------------

------------------------------

Hope this helps you out and if so, please mark the current thread as the 'Answer', so others can refer to the same for reference in future.

Regards,

Devneet Mohanty,

SS&C Blueprism Community MVP 2024,

Automation Architect,

Wonderbotz India Pvt. Ltd.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

24-02-22 03:32 PM

This is really great stuff.

Thanks for sharing.

jack

------------------------------

Jack Look

Sr Product Consultant

Blue Prism

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

02-05-22 03:50 PM

Hi Eric, this is really promising!

Is there any way for me to change the encoding options? It doesn't really like my CSV files because of special characters.

Hálsaþing 4;203;1116498;1000;Kópavogsbær;18.04.2011 00:00;02.05.2011 09:18;48000;44450;2007;10101;244,1;480;m²;Serbyli;1;0

------------------------------

Atli Harðarson

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

02-05-22 06:59 PM

Can you elaborate on your situation? Are you receiving an error or exception? If so, can you share the details? There is a CharacterSet value that can be added to the OleDbConnectionString that's created within the Code stage that might help here. Here's an example of what the connection string might look like:

Using cn As New OleDbConnection("Provider=Microsoft.Jet.OLEDB.4.0; Data Source=" & Folder & ";Extended Properties=""Text;HDR=" & HDRString & ";Format=Delimited;CharacterSet=UTF8;""")Cheers,

------------------------------

Eric Wilson

Director, Integrations and Enablement

Blue Prism Digital Exchange

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

03-05-22 09:02 AM

Very useful article for working with large CSV files. Thanks for sharing !!

------------------------------

Manpreet Kaur

Manager

Deloitte

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

03-05-22 12:03 PM

Thanks Eric, solved the UTF-8

Had to change UTF-8 to 65001, now also trying to solve the ";" delimiter 🙂

Using cn As New OleDbConnection("Provider=Microsoft.Jet.OLEDB.4.0; Data Source=" & Folder & ";Extended Properties=""Text;HDR=" & HDRString & ";Format=Delimited;CharacterSet=65001;""")------------------------------

Atli Harðarson

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

03-05-22 12:11 PM

Try changing Format=Delimited to Format=Delimited(;).

Cheers,

------------------------------

Eric Wilson

Director, Integrations and Enablement

Blue Prism Digital Exchange

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

12-06-23 11:26 AM

Thanks for this post, really helpful.

However, I have a question. How to work with such large xlsx/csv file in a situation where I need to find one record according to the X criteria, then find another record according to the Y criteria, etc. I have the criteria saved in another xlsx/csv file, which has about 600-1000 rows.

Could you help?

------------------------------

Marcin Grzelak

Blue Prism Developer

TSYS Managed Services EMEA Limited

Europe/London

------------------------------

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Email to a Friend

- Report Inappropriate Content

27-03-24 08:47 AM

Hi @ewilson, I need to add additional field to the CSV with a single value in the entire field. tried with the above method and getting "internal : Could not execute code stage because exception thrown by the code stage : Unspecified error" this error.

The file I was handling will have nearly 20k rows and 90 to 100 columns depends on the case. Can anyone please suggest me the best way to read this file.

- BluePrism script building Agent in Product Forum

- Memory Management in Long-Running Blue Prism Processes in Product Forum

- Outlook Email VBO: Read From MSG Fails to Convert New Outlook MSG Files to HTML in Product Forum

- Sharepoint Graph APi - issue with Move/copy file in Product Forum

- Handling large CSV file in Product Forum