Decipher minimum requirements: performance problems

27-04-23 12:28 PM

Hi,

I am facing a performance problem with Decipher that makes the web server respond very slowly during manual Data Verification. Sometimes the connection to the server is lost, along with any unsaved verification progress.

We use Decipher to extract data from documents that are 10-15 pages long. When 3-4 of those documents are sent to Decipher at the same time, the CPU starts running at 100% for several minutes (almost half an hour) and the web server becomes slow and unstable.

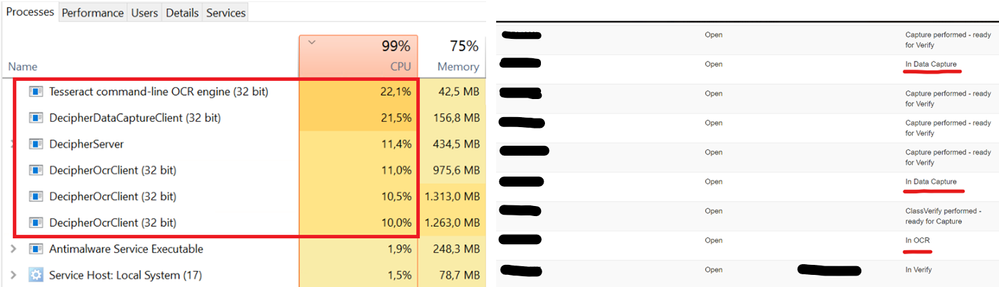

Here is a screenshot of the situation, showing the Task Manager and the batches in Decipher (Admin panel > Batches).

From the screenshot we can see that 4 GB of RAM is being used by Decipher (around 1.2 GB per document + 434 MB for the web server).

The Decipher minimum requirements documentation says 1 GB is enough for 10,000 pages per day. I did some basic math: 24 x 60 x 60 / 10,000 = 8.64 seconds per page.

But the time to process a 10-page document is far from 86.4 seconds; it is closer to 10 minutes. Is that normal? We expect to process around 160 documents of this type per day in the near future.
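As a rough sanity check of the arithmetic above, here is a minimal Python sketch. It uses only the figures from this post (10,000 pages per day, about 10 minutes per 10-page document, 160 documents per day). The assumption that documents are processed one at a time at the observed speed is mine, purely for illustration; it is not a statement about how Decipher schedules work.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def seconds_per_page(pages_per_day: int) -> float:
    """Average time budget per page if the load is spread evenly over 24 hours."""
    return SECONDS_PER_DAY / pages_per_day


budget = seconds_per_page(10_000)      # documented figure: 10,000 pages/day
expected_10_pages = budget * 10        # time budget for a 10-page document
observed_10_pages = 10 * 60            # about 10 minutes, as reported above

# How much slower the observed processing is than the documented budget.
slowdown = observed_10_pages / expected_10_pages

# Assumption (illustrative only): 160 documents/day handled strictly one
# after another at the observed speed.
hours_needed = 160 * observed_10_pages / 3600

print(f"budget: {budget:.2f} s/page, {expected_10_pages:.1f} s per 10 pages")
print(f"observed is about {slowdown:.1f}x slower than the budget")
print(f"160 documents sequentially: {hours_needed:.1f} hours")
```

Under that sequential assumption the projected daily volume would not fit in 24 hours at the current speed, which is why the question of parallel processing matters.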

Our documents have more than 200 fields to extract. Which factor affects performance the most: the number of pages or the number of fields to extract?

Here are the specs of our environment:

- Intel Xeon E5-2697 v4 2.30 GHz (4 cores)

- 16 GB RAM

- NLP Plugin not installed

- We have defined 8 Capture models, but none of them were being trained when the screenshot was taken

Our plan is to scale up the number of documents in Decipher. That's why I know we need to increase our server resources, but I don't know how much is really needed.

If we get an 8-core CPU, does that mean that if I send 8 documents to Decipher it will use 1.2 x 8 GB (9.6 GB) of RAM?
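The estimate in that question can be written as a small Python sketch. It assumes memory grows linearly with the number of documents processed at once (about 1.2 GB each) plus a fixed 434 MB for the web server, which are the figures read from the screenshot. Whether Decipher really scales this way is exactly what is being asked, so treat the function and its name as hypothetical.

```python
def estimated_ram_gb(concurrent_docs: int,
                     per_doc_gb: float = 1.2,
                     web_server_gb: float = 0.434) -> float:
    """Linear estimate: per-document memory times concurrency, plus the web server.

    The linear model is an assumption taken from the figures in this post,
    not documented Decipher behavior.
    """
    return concurrent_docs * per_doc_gb + web_server_gb


for n in (3, 4, 8):
    print(f"{n} concurrent documents: ~{estimated_ram_gb(n):.2f} GB")
```

With three documents the model gives roughly the 4 GB seen in the screenshot; with eight it gives about 10 GB once the web server is included.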

Are all Decipher processes fully parallelizable, or is it better to send a large number of batches little by little so as not to saturate the server?

Thank you very much in advance

01-05-23 02:19 PM

Hi Oroel.

Are you running all of the Decipher components on a single server? The Decipher Automated Client service does most of the work and it is recommended (in environments other than a sandbox) that you distribute the components across multiple servers. It is also recommended that you have at least two automated client services running on different servers. Decipher will round-robin the workload between the two services to help with throughput.

Also, I believe it is better to run small batches. This, in addition to the multiple automated client services, will keep a higher throughput.
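To illustrate the idea of small batches shared round-robin between two automated client services, here is a conceptual Python sketch. It does not use any Decipher API: the file names, the client names `client_a` and `client_b`, the batch size of 2, and the helper functions are all hypothetical, and Decipher performs its own distribution internally.

```python
from itertools import cycle


def chunk(items, size):
    """Split a list into consecutive batches of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def assign_round_robin(batches, clients):
    """Hand out batches to clients in turn: first, second, first, second, ..."""
    assignment = {client: [] for client in clients}
    for client, batch in zip(cycle(clients), batches):
        assignment[client].append(batch)
    return assignment


# Hypothetical input: eight documents, split into small batches of two.
docs = [f"doc_{i:02d}.pdf" for i in range(1, 9)]
batches = chunk(docs, 2)
plan = assign_round_robin(batches, ["client_a", "client_b"])

for client, assigned in plan.items():
    print(client, assigned)
```

Each client ends up with half of the batches, so neither one holds a single large batch while the other sits idle.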

This link will be helpful: Multi-device deployment (blueprism.com)

jack

04-05-23 11:44 AM

Hi @Jack Look, thanks for your reply.

We are running all components on a single server (except for SQL Server) and would like to keep it that way, although we understand a multi-server installation would be beneficial.

I checked the Sizing Guides. Our usage size of Decipher is around "Medium", but I have a question:

In the first column the table says: "Sizing numbers are per VM. HA and DR sizing are pro rata". Four different CPU-RAM-HDD configurations are given per size (per row). Does the table assume we are running the Decipher components on 4 virtual machines in a multi-server installation? Or do we only need to have at least the most powerful "Medium" configuration if we have a single-VM installation?

So, what are the minimum requirements for a single-VM installation with a usage size of "Medium"?

I also don't understand what "HA" and "DR" mean; it is not explained. Sorry for my lack of knowledge.

Thank you for the help.

P.S.: I just realized this post should have been an open discussion instead of a closed question, sorry

04-05-23 02:10 PM

Hi Oroel.

The sizing guide does assume you are running in a multi-VM environment with the various components on each of the VMs. We do not recommend running the Decipher stack on a single VM except for low-usage environments like dev or a POC. I understand you want to keep all of your Decipher components on a single VM but we don't have any specifications for such a configuration outside of the basics laid out in the sizing guide.

The assumption is that a single-VM installation will not be doing high-volume processing. Since that is what you want, my suggestion is simply to increase the RAM/CPU and monitor the performance. One of the challenges, however, is that the automated client will consume as many resources as are available. You'll simply have to see how it goes.

HA means "high availability" and DR means "disaster recovery".

jack